Quantum computing is one of the most radical departures from the classical computing paradigm. By harnessing the counterintuitive principles of quantum mechanics, it promises to change how certain classes of problems are computed. This article introduces quantum computing: its fundamental concepts, governing principles, and potential applications.

The Quantum Leap from Classical to Quantum Computing

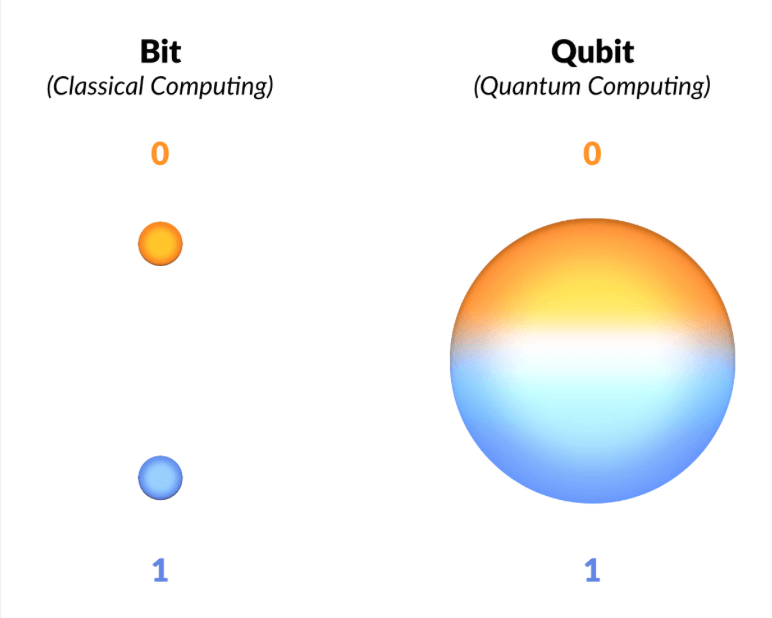

Traditional, or classical, computers use bits as the smallest unit of data; each bit is in a state of either 0 or 1. A quantum computer, on the other hand, uses quantum bits, or qubits. Unlike a classical bit, a qubit can exist in a superposition of states: a weighted combination of 0 and 1 that resolves to a single definite value only when the qubit is measured.

This oddity arises from quantum mechanics, the branch of physics that describes the behavior of particles at the atomic and subatomic levels. In the quantum realm, particles can exist in a combination of several states at once, a property called superposition. Moreover, they can be entangled: a phenomenon where the measurement outcomes of one particle are correlated with those of another, regardless of the distance separating them. These two principles, superposition and entanglement, lay the groundwork for quantum computing.

Quantum Computing: The Qubits and Quantum Gates

In a quantum computer, information is stored in qubits. Each qubit is described by two complex probability amplitudes, one for the 0 state and one for the 1 state, which is what it means for the qubit to be in a superposition of these states. When measured, however, a qubit collapses to either 0 or 1, and the probability of each outcome is the squared magnitude of the corresponding amplitude.
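As a rough illustration of how amplitudes turn into measurement probabilities, here is a minimal sketch in plain Python. It assumes the standard rule that an outcome's probability is the squared magnitude of its amplitude; the function names `measurement_probabilities` and `measure` are hypothetical helpers, not part of any quantum library.

```python
import math
import random

def measurement_probabilities(alpha, beta):
    """Return (P(0), P(1)) for a qubit with amplitudes alpha (for 0) and beta (for 1)."""
    # Normalize so the two probabilities always sum to 1.
    norm = abs(alpha) ** 2 + abs(beta) ** 2
    return abs(alpha) ** 2 / norm, abs(beta) ** 2 / norm

def measure(alpha, beta, rng=random):
    """Simulate one measurement: returns 0 or 1 according to the probabilities."""
    p0, _ = measurement_probabilities(alpha, beta)
    return 0 if rng.random() < p0 else 1

# An equal superposition whose second amplitude carries a complex phase.
alpha = 1 / math.sqrt(2)
beta = 1j / math.sqrt(2)
p0, p1 = measurement_probabilities(alpha, beta)
```

Note that the complex phase on `beta` does not change the probabilities of a direct measurement; it only matters once gates combine the amplitudes.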

Operations on qubits are carried out by quantum gates, analogous to the logic gates in classical computers. Quantum gates manipulate qubits through operations like flipping states or entangling two qubits. Unlike most classical gates (an AND gate, for example, discards information about its inputs), quantum gates are reversible: applying a gate's inverse restores the initial state of the qubits. Superposition lets a gate act on many basis states at once, but a measurement returns only a single outcome, so a speedup depends on algorithms that arrange the amplitudes carefully. For certain problems, such algorithms are believed to be exponentially faster than the best known classical methods.
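To make the idea of a reversible gate concrete, the following sketch represents single-qubit gates as 2x2 matrices acting on a two-element amplitude vector. The matrices for the bit-flip (X) and Hadamard (H) gates are standard; the helpers `apply` and `dagger` are illustrative names chosen here.

```python
import math

def apply(gate, state):
    """Multiply a 2x2 gate matrix by a 2-element state vector."""
    return [
        gate[0][0] * state[0] + gate[0][1] * state[1],
        gate[1][0] * state[0] + gate[1][1] * state[1],
    ]

def dagger(gate):
    """Conjugate transpose of a 2x2 gate; for a unitary gate this is its inverse."""
    return [
        [complex(gate[0][0]).conjugate(), complex(gate[1][0]).conjugate()],
        [complex(gate[0][1]).conjugate(), complex(gate[1][1]).conjugate()],
    ]

S = 1 / math.sqrt(2)
X = [[0, 1], [1, 0]]    # bit flip: swaps the 0 and 1 amplitudes
H = [[S, S], [S, -S]]   # Hadamard: turns a definite state into an equal superposition

zero = [1, 0]                            # the qubit starts in state 0
flipped = apply(X, zero)                 # state 1
superposed = apply(H, zero)              # equal superposition of 0 and 1
restored = apply(dagger(H), superposed)  # undoing H recovers state 0 (up to rounding)
```

Applying `dagger(H)` after `H` returns the original amplitudes, which is the sense in which the gate is reversible.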

Quantum Computing Principles

Let’s delve deeper into the principles that underpin quantum computing:

Superposition

The principle of superposition allows a qubit to exist in multiple states at once. For example, while a classical bit can be either 0 or 1, a qubit can be 0, 1, or any combination thereof. This is akin to spinning a coin: while in motion, the coin is neither entirely ‘heads’ nor ‘tails,’ but some probability of both.

Entanglement

Entanglement is another key quantum principle, which Einstein famously referred to as “spooky action at a distance.” When qubits become entangled, the state of one qubit becomes correlated with the state of another, no matter how far apart they are. Measuring one qubit immediately tells you what a measurement of the other will show, although this correlation cannot be used to send information faster than light. This remarkable feature provides a powerful tool for quantum computing.
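The correlation can be illustrated with a small classical simulation of measuring an entangled pair. This sketch assumes the two-qubit Bell state with equal amplitudes on 00 and 11 (the state a Hadamard gate followed by a CNOT gate produces from 00); `BELL` and `sample_pair` are hypothetical names, and the simulation only reproduces the measurement statistics, not the physics.

```python
import math
import random

S = 1 / math.sqrt(2)
# Amplitudes for the four joint outcomes of two qubits in a Bell state.
BELL = {"00": S, "01": 0.0, "10": 0.0, "11": S}

def sample_pair(state, rng):
    """Sample one joint measurement outcome with probability |amplitude|^2."""
    outcomes = list(state)
    weights = [abs(state[o]) ** 2 for o in outcomes]
    return rng.choices(outcomes, weights=weights, k=1)[0]

rng = random.Random(0)  # fixed seed so the run is repeatable
samples = [sample_pair(BELL, rng) for _ in range(1000)]

# Each qubit on its own looks like a fair coin flip...
fraction_zero = sum(s[0] == "0" for s in samples) / len(samples)
# ...yet the two results agree in every single sample.
always_equal = all(s[0] == s[1] for s in samples)
```

Neither qubit's individual result is predictable, which is why the correlation by itself carries no message from one side to the other.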

Quantum Interference

Quantum interference is a principle that permits the manipulation of superpositions. Just like waves in the sea can constructively or destructively interfere, the same happens with the probability amplitudes of a qubit’s state. Clever use of this phenomenon can cancel out erroneous paths in a calculation and reinforce the correct ones, guiding the quantum algorithm towards the desired solution.
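A small sketch can show amplitudes cancelling and reinforcing. It assumes the standard Hadamard (H) and phase-flip (Z) matrices and uses illustrative helper names; the point is only that a sign change on one amplitude decides which final outcome survives.

```python
import math

S = 1 / math.sqrt(2)
H = [[S, S], [S, -S]]   # Hadamard: splits and later recombines the amplitudes
Z = [[1, 0], [0, -1]]   # phase flip: negates the amplitude of state 1

def apply(gate, state):
    """Multiply a 2x2 gate matrix by a 2-element state vector."""
    return [sum(gate[r][c] * state[c] for c in range(2)) for r in range(2)]

def probabilities(state):
    """Squared magnitudes of the amplitudes, i.e. the outcome probabilities."""
    return [abs(a) ** 2 for a in state]

zero = [1.0, 0.0]
# Two Hadamards in a row: the contributions to outcome 1 cancel.
no_phase = probabilities(apply(H, apply(H, zero)))
# A phase flip in between: now the contributions to outcome 0 cancel instead.
with_phase = probabilities(apply(H, apply(Z, apply(H, zero))))
```

In both runs the intermediate state gives a 50/50 measurement, yet the final outcome is certain; only the hidden sign of one amplitude differs.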

Applications of Quantum Computing

By exploiting superposition, entanglement, and interference, quantum computers have the potential to solve some problems that are currently infeasible for classical computers.

Cryptography

One of the best-known potential applications of quantum computing is in the field of cryptography. Shor’s algorithm, for instance, can factor large numbers in polynomial time, a task for which no efficient classical algorithm is known. Run on a sufficiently large, error-corrected quantum computer, it could break widely used public-key encryption schemes such as RSA, necessitating a shift towards quantum-safe encryption methods. On the other hand, quantum key distribution (QKD) offers a way to exchange keys whose security rests on the laws of physics rather than on computational hardness, at least under idealized assumptions about the hardware, enabling highly secure communication.
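The number theory behind Shor’s algorithm can be sketched classically. In the sketch below, the brute-force loop in `find_period` stands in for the quantum period-finding step, which is the only part a quantum computer speeds up; the function names and the example values 7 and 15 are choices made here for illustration.

```python
from math import gcd

def find_period(a, n):
    """Smallest r > 0 with a**r % n == 1, found by brute force.

    This loop is a classical stand-in for the quantum period-finding step
    and takes time that grows quickly with n.
    """
    r, value = 1, a % n
    while value != 1:
        value = (value * a) % n
        r += 1
    return r

def factors_from_period(a, n):
    """Try to split n using the period of a modulo n; None if this a fails."""
    if gcd(a, n) != 1:
        return None  # assumption: the caller picks a coprime to n
    r = find_period(a, n)
    if r % 2 == 1:
        return None  # an odd period gives no usable square root
    half = pow(a, r // 2, n)
    if half == n - 1:
        return None  # trivial square root of 1; retry with another a
    return tuple(sorted((gcd(half - 1, n), gcd(half + 1, n))))

result = factors_from_period(7, 15)  # period of 7 mod 15 is 4, giving (3, 5)
```

When a choice of `a` fails, the usual remedy is simply to try another value.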

Drug Discovery

Quantum computers could simulate and analyze complex molecular structures, a process currently too computationally intensive for classical computers. Such capabilities could significantly advance drug discovery and material science, leading to the development of new medicines and materials with bespoke properties.

Machine Learning and Big Data

Machine learning algorithms, especially those involving optimization and pattern recognition in large datasets, could benefit from quantum computing. Quantum versions of neural networks and support vector machines are being developed, and some proposals claim large, in certain cases exponential, speedups over their classical counterparts, although such advantages depend on strong assumptions about how the data is loaded and have yet to be demonstrated in practice.

Climate Modeling

The climate system is incredibly complex, with numerous interdependencies and variables. Quantum computers could potentially handle this complexity, offering more accurate climate models and predictions that could help us better understand and combat climate change.

Financial Modeling

The financial world is riddled with complex systems that are currently impossible to accurately model. Quantum computers could revolutionize this field, optimizing trading strategies, managing risk more effectively, and creating more accurate models of financial markets.

The Challenges Ahead

Despite its promise, quantum computing faces several significant hurdles. Keeping qubits in a well-defined superposition, a property known as quantum coherence, is extremely difficult because interactions with the environment cause decoherence. In addition, quantum error correction needs to be robust enough to handle the errors that inevitably occur during quantum operations.

Furthermore, designing efficient quantum algorithms is another formidable challenge. Despite notable successes, such as Shor’s and Grover’s algorithms, we are just scratching the surface of what is computationally possible on quantum computers.
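To give a feel for what such an algorithm does, here is a toy classical simulation of Grover’s search over 8 items. It tracks the full list of amplitudes directly, which is only practical for tiny sizes; the function name `grover_success_probability` and the marked index 5 are arbitrary choices for this sketch.

```python
import math

def grover_success_probability(n_items, marked, iterations):
    """Simulate Grover iterations and return the probability of
    measuring the marked index afterwards."""
    amps = [1 / math.sqrt(n_items)] * n_items   # start in a uniform superposition
    for _ in range(iterations):
        amps[marked] = -amps[marked]            # oracle: flip the sign of the marked item
        mean = sum(amps) / n_items
        amps = [2 * mean - a for a in amps]     # diffusion: reflect every amplitude about the mean
    return amps[marked] ** 2

n_items = 8
# Roughly (pi / 4) * sqrt(N) iterations is the usual rule of thumb.
iterations = math.floor(math.pi / 4 * math.sqrt(n_items))
p = grover_success_probability(n_items, marked=5, iterations=iterations)
```

With these values the marked item's probability rises from 1/8 to about 0.945 after two iterations, compared with the four guesses a classical search would need on average.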

Conclusion

Quantum computing is not just a shift in computational capacity but a paradigm shift in how we process information. It promises to transform various fields, from cryptography to drug discovery, climate modeling, and beyond.

However, the road to practical quantum computing is filled with technical and conceptual challenges that researchers around the world are striving to overcome. When they do, we’ll be prepared for a quantum leap into the future, where problems once thought intractable become solvable and where the bounds of computation extend beyond our current imagination.